Background

A few weeks ago, I commented on an interesting LinkedIn thread about independence and common cause failure. The thread was started by Dr. Sanjeev Saraf (see his blog Risk Safety Reliability).

In the original post, there was a comment that: “typically, common cause is quantified as 2-5% of the failure rate (referred as Beta)”.

In my experience with safety instrumented systems, this is a true statement. But is it correct? Meaning, “everyone” does it, but are they doing the right thing?

SINTEF Common Cause Report

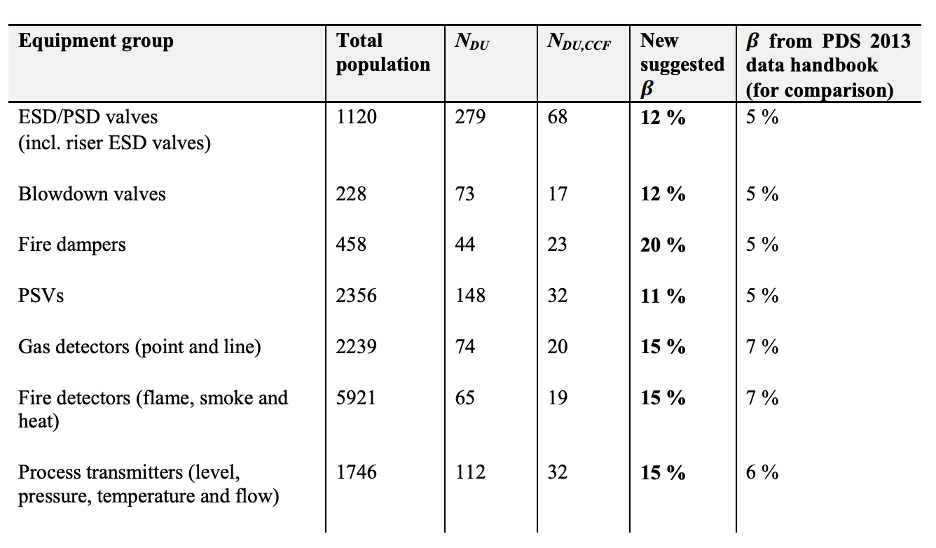

I posted a link to a 2015 SINTEF report Common Cause Failure in Safety Instrumented Systems. Based on actual field data, this report gives some surprisingly high estimates for common cause Beta factors:

Those new suggested Beta values (column 5) may come as a surprise to those who are accustomed to just slapping 2% into their favorite SIL calculation software.

But how surprising are these numbers, really?
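To get a feel for why the choice of Beta matters, here is a minimal sketch using the widely used simplified beta-factor equation for a 1oo2 voted pair. It is not taken from the SINTEF report. The failure rate and proof test interval are hypothetical values chosen only for illustration, and the formula ignores detected failures, repair time, and proof test coverage.

```python
def pfd_avg_1oo2(lambda_du, test_interval_h, beta):
    """Simplified average PFD of a 1oo2 group using the beta-factor model.

    lambda_du       -- dangerous undetected failure rate per hour (per channel)
    test_interval_h -- proof test interval in hours
    beta            -- fraction of failures that are common cause (0..1)
    """
    # Both channels fail independently within the same test interval.
    independent = ((1.0 - beta) * lambda_du * test_interval_h) ** 2 / 3.0
    # A single common cause event takes out both channels at once.
    common_cause = beta * lambda_du * test_interval_h / 2.0
    return independent + common_cause


# Hypothetical inputs, for illustration only.
LAMBDA_DU = 1.0e-6        # per hour
TEST_INTERVAL_H = 8760.0  # one year between proof tests

for beta in (0.02, 0.05, 0.10, 0.15):
    pfd = pfd_avg_1oo2(LAMBDA_DU, TEST_INTERVAL_H, beta)
    print(f"beta = {beta:4.0%}  PFDavg = {pfd:.2e}  RRF = {1 / pfd:,.0f}")
```

With these assumed inputs, the common cause term is larger than the independent term at every Beta shown, so the calculated PFDavg rises almost in proportion to Beta. In a redundant architecture, the Beta you pick can matter more than the device failure rate you spent weeks debating.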

Origins of Typical Practice

Before discussing other sources, where did “typical practice” come from for SIS common cause? The most widely available practical resource is the non-normative guidance in IEC 61508-6 Annex D.

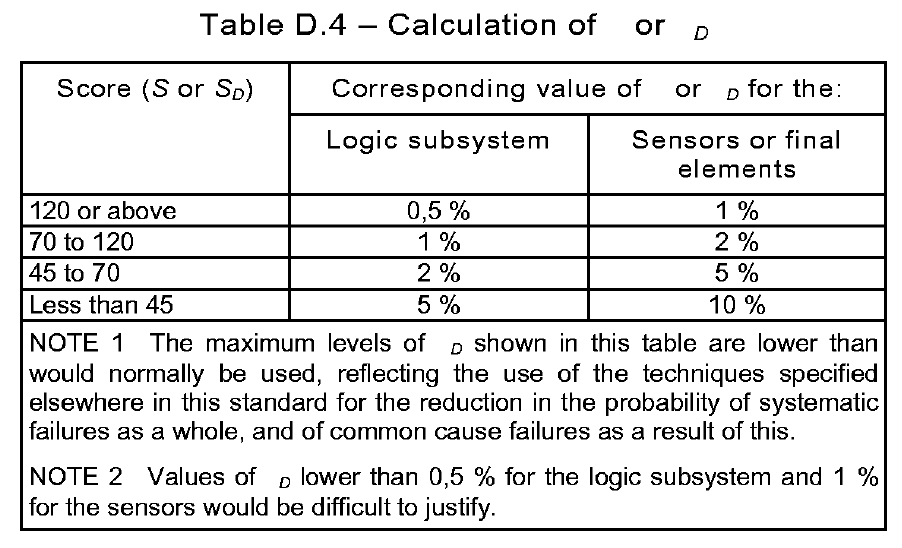

Annex D proposes a set of checklists that are scored based on the different procedural and design safeguards that are built into the system. Based on the final cumulative score, a recommended Beta factor can be looked up from the final table:

Interestingly, Note 1 in the table even says the values in the table are “lower than would normally be used”.

I will make two observations about this approach:

(1) It is design-side only: The evaluation is done by an engineer during the design phase while preparing SIL calculations. It does not reflect the “reality” of inputs from construction, operations, or maintenance of the system.

(2) It encourages a kind of circular thinking: Companies that have poor safety practices are likely to overestimate the effectiveness of their operations and maintenance, leading to a low Beta estimate, which allows for a less robust design. (This is the type of effect that I think is estimated in the exida “site safety index”.)

NASA Common Cause and Beta

The NASA Probabilistic Risk Assessment Procedures Guide has an informative section on common cause (CC) failure analysis. Near the end of the chapter (in section 7.8) they suggest that if no other data is available, a generic factor of Beta = 10% may be appropriate. In a separate study for the Space Shuttle program, they estimated a generic factor of Beta = 13%. Pretty high! But I guess the oil & gas & chemical industries are just more scrupulous in eliminating CC failures than those cowboys at NASA, amiright?

Some commenters in the LinkedIn thread pointed out the inherent difficulties and uncertainties in estimating Beta factors. This is undeniably true. But does that mean we shouldn’t try to do it? I will answer with an analogy:

Dad: "Teaching our son to drive is hard, and he may not be a good driver when I am done. So, I just won't do it."

Mom: "So he won't get a driver's license?"

Dad: "Oh... no, he's out driving right now..."

Nuclear Common Cause

As usual, if we want to find the most rigorous treatment of a safety and risk problem, we can turn to the nuclear industry.

- NUREG/CR-4780 – Procedures for Treating Common Cause Failures in Safety and Reliability Studies (unfortunately not freely available online)

- NUREG/CR-5485 – Guidelines on Modeling Common-Cause Failures in Probabilistic Risk Assessment

- NUREG/CR-6268 – Common-Cause Failure Database and Analysis System: Event Data Collection, Classification, and Coding

- IAEA-TECDOC-648 – Procedures for conducting common cause failure analysis in probabilistic safety assessment (a shorter document)

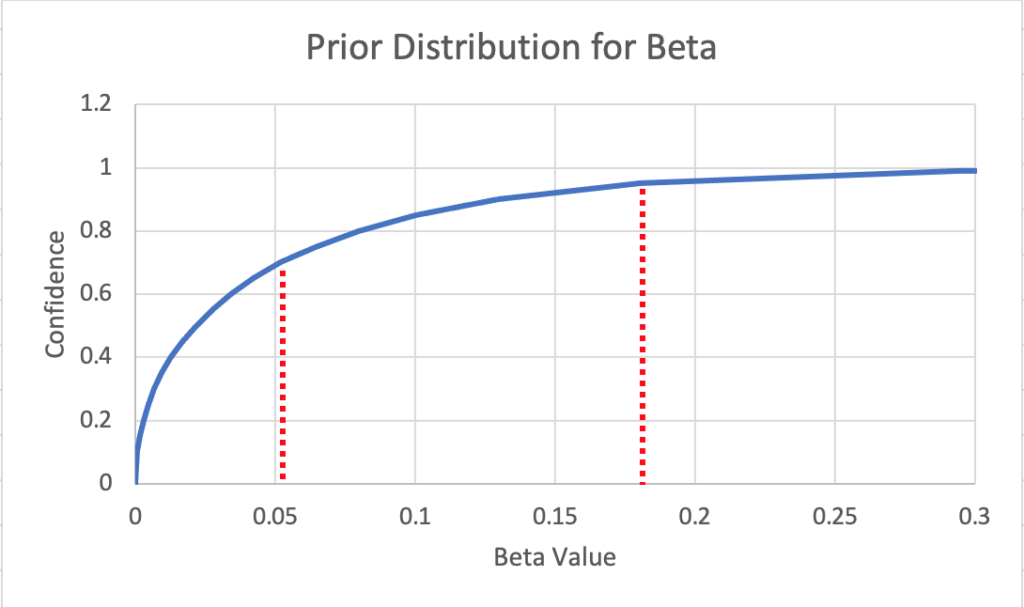

Given the sparse data and the need to consider uncertainty, it should be no surprise that the above documents propose a Bayesian approach. See Appendix D of CR-5485 above for the development of a hierarchical Bayesian model for CC failures. However, as a starting point when no additional data is available, they provide a generic prior distribution for Beta:

Based on the chart above, the 70% confidence level for Beta is 5.2%. But the 95% confidence level for Beta is 18.1%. Yikes!

To me, these numbers raise the question: is your plant more rigorous about common cause failures than a typical nuclear power plant?
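To make the Bayesian idea a little more concrete, here is a minimal sketch of the simplest possible version: a Beta distribution prior on the common cause fraction, updated with a count of observed failures. This is not the hierarchical model from Appendix D of NUREG/CR-5485. The prior parameters and the failure counts below are hypothetical placeholders, not values fitted to the NUREG generic prior, and treating each failure as a simple "common cause or not" trial is a simplifying assumption.

```python
import random


def update_beta_prior(a_prior, b_prior, n_ccf, n_total):
    """Conjugate update of a Beta(a, b) prior on the common cause fraction.

    n_ccf   -- observed failures classified as common cause
    n_total -- all observed failures (common cause plus independent)
    """
    if not 0 <= n_ccf <= n_total:
        raise ValueError("n_ccf must be between 0 and n_total")
    return a_prior + n_ccf, b_prior + (n_total - n_ccf)


def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)


def beta_percentile_mc(a, b, q, n_samples=100_000, seed=1):
    """Monte Carlo estimate of the q-quantile of Beta(a, b), stdlib only."""
    rng = random.Random(seed)
    samples = sorted(rng.betavariate(a, b) for _ in range(n_samples))
    return samples[min(int(q * n_samples), n_samples - 1)]


# Hypothetical prior and field data, for illustration only.
A_PRIOR, B_PRIOR = 1.0, 12.0  # assumed prior, mean of about 7.7%
N_CCF, N_TOTAL = 3, 20        # assumed plant failure records

a_post, b_post = update_beta_prior(A_PRIOR, B_PRIOR, N_CCF, N_TOTAL)
print(f"prior mean     = {beta_mean(A_PRIOR, B_PRIOR):.1%}")
print(f"posterior mean = {beta_mean(a_post, b_post):.1%}")
print(f"posterior 95th percentile = {beta_percentile_mc(a_post, b_post, 0.95):.1%}")
```

The useful part is that the answer comes out as a distribution, not a single number. With sparse data the upper percentile sits well above the mean, which is the same pattern the generic nuclear prior shows.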

A Side Note: Systematic?

Someone rightly pointed out in the thread that CC failures are by and large systematic failures. I avoided the random vs. systematic debate in this post, but if you want to know my opinion, please check out this classic post: IEC 61511 is Wrong About Systematic Failures.

Conclusion

Bottom line, if you are a Functional Safety engineer struggling with how to estimate common cause Beta factors, you are not alone.

Unfortunately, the literature on CC in functional safety and SIS is limited and mostly paywalled. The IEC 61508 guidance is useful, but may lead to over-confidence if IEC 61508 practices are not rigorously followed. However, SINTEF, NASA, and NUREG provide helpful (if complex) guidance based on real-world data.

If you do not have the time or resources for a data-driven approach, then the generic numbers from SINTEF and NASA are worth a look. Does the old “2-5%” approach hold up in light of this information?

For a more data-driven approach, the nuclear industry has paved the way with a Bayesian approach for common cause estimation that blends expert knowledge with field data. For a similar approach applied to SIS prior use, please see my paper Hierarchical Bayesian Prior Use.

Remember, common cause is not something we just estimate and file on a shelf. To a certain extent, CC can be controlled via good design, engineering practices, and operational procedures. The data-driven approaches above can close the loop and allow continual monitoring and improvement.

Thanks for reading! What would you add to the common cause discussion?